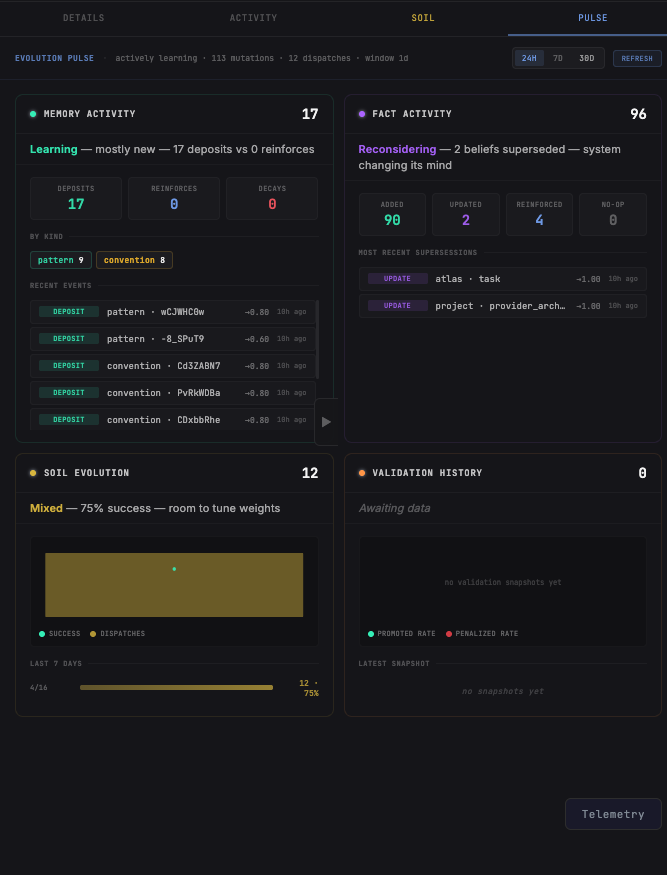

Atlas-Colosseum

Orchestration, cognition, and evolution. Three systems that work together. Atlas coordinates. SimLink thinks. Colosseum evolves. The infrastructure that makes autonomous AI systems actually work in production.

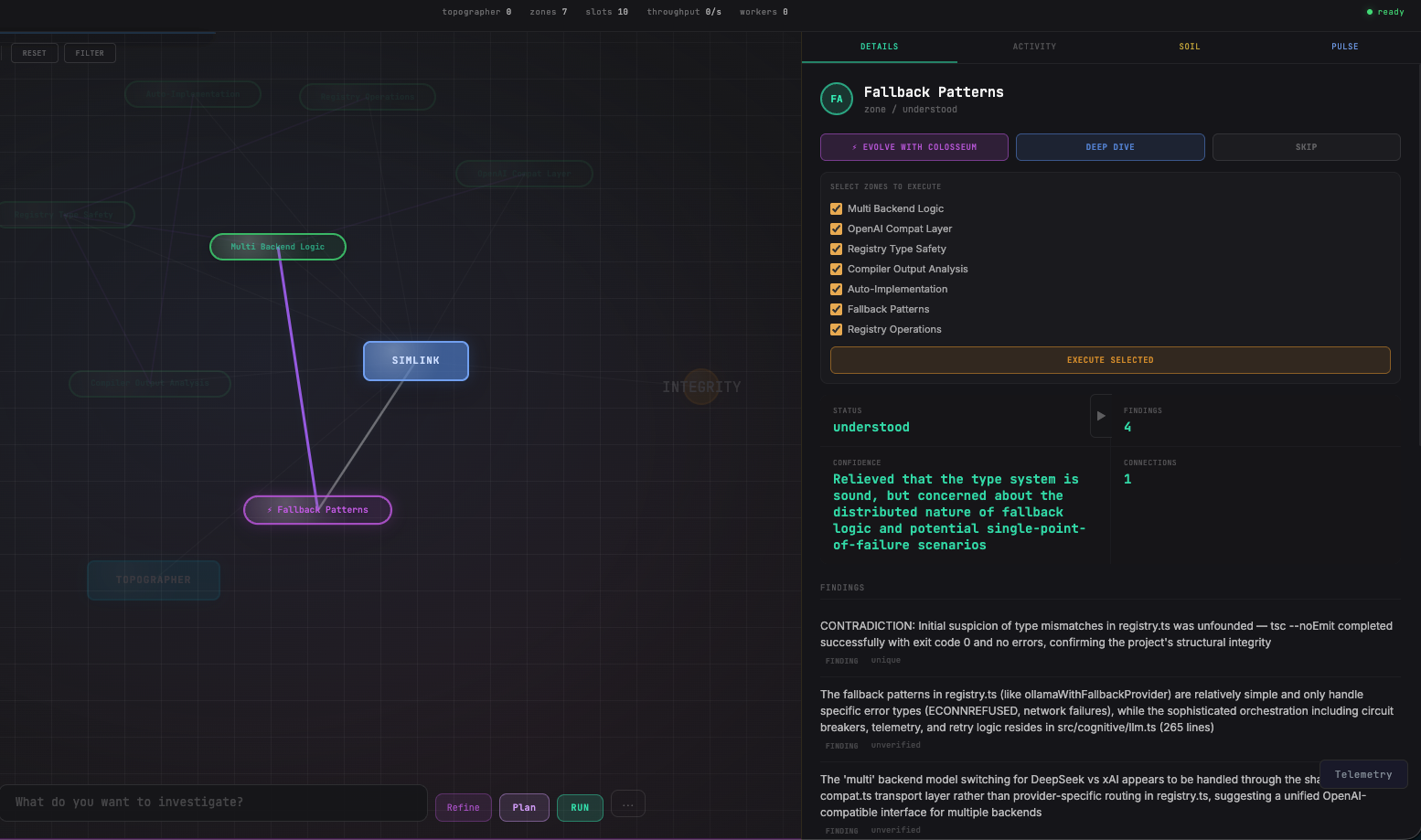

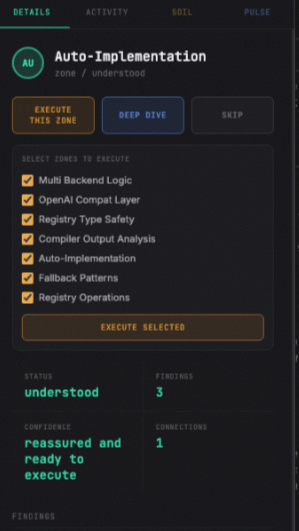

Decoding The Mesh.

SimLink is the first investigation engine that doesn't just read code: it analyzes the architectural mesh. Using the Topographer, it identifies inquiry zones autonomously.

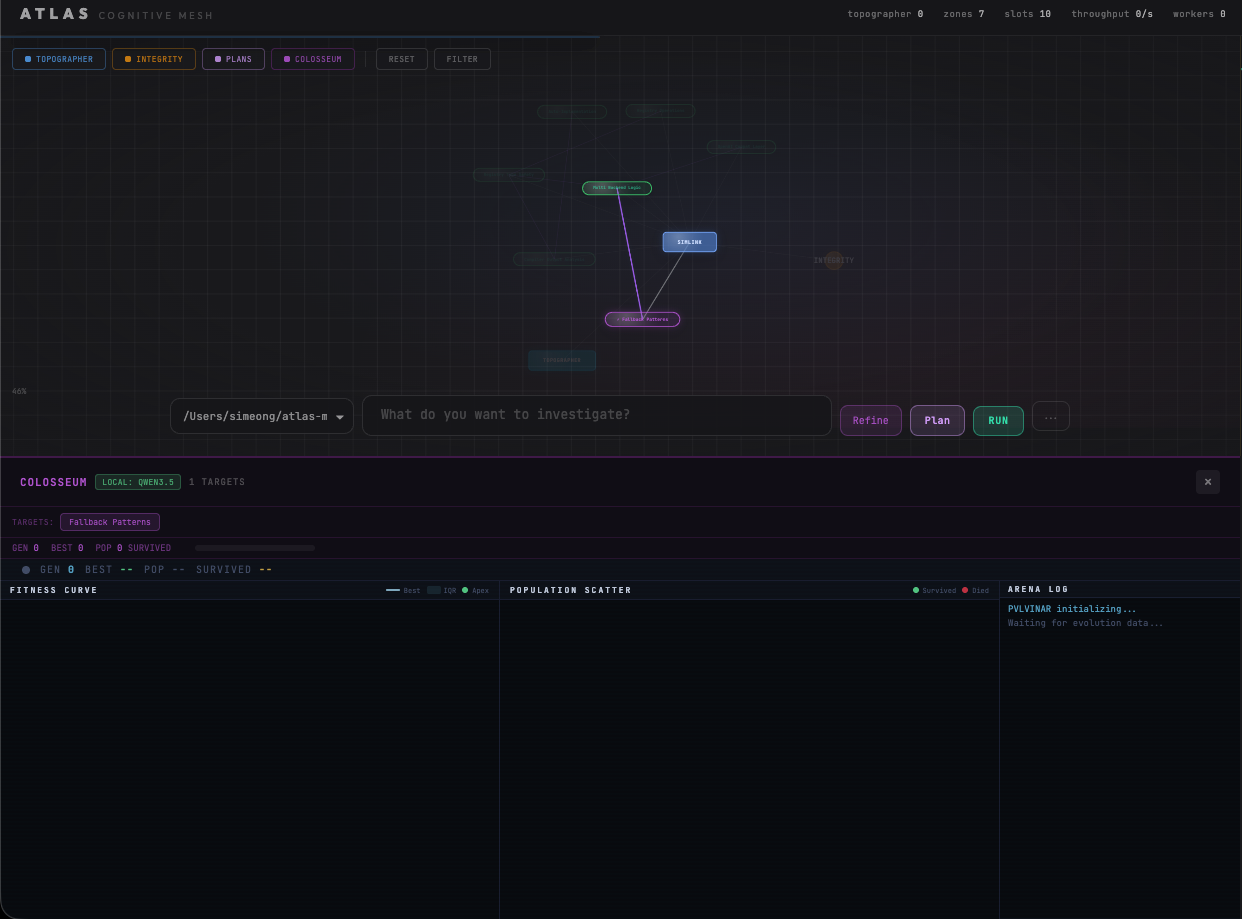

Parallel Orchestra.

Atlas is the execution heart of the system. It breaks complex plans into a Directed Acyclic Graph (DAG) and dispatches parallel workers in isolated worktrees.
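To make the DAG idea concrete, here is a minimal sketch of dependency-ordered parallel dispatch using only the Python standard library. It is not Atlas's implementation: `run_plan`, `_run_isolated`, and the task names are hypothetical, and a fresh temporary directory stands in for an isolated worktree.

```python
import tempfile
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter


def _run_isolated(name, task_fn):
    # Assumption: a throwaway temp directory stands in for an isolated worktree.
    with tempfile.TemporaryDirectory(prefix=f"{name}-") as workdir:
        return task_fn(name, workdir)


def run_plan(plan, task_fn, max_workers=4):
    """Run every task in `plan`, which maps a task name to the set of
    task names it depends on. Independent tasks run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    results = {}
    in_flight = {}  # future -> task name
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Dispatch every task whose dependencies have all finished.
            for name in sorter.get_ready():
                in_flight[pool.submit(_run_isolated, name, task_fn)] = name
            # Block until at least one running task completes.
            done, _ = wait(in_flight, return_when=FIRST_COMPLETED)
            for future in done:
                name = in_flight.pop(future)
                results[name] = future.result()
                sorter.done(name)  # may unlock dependent tasks
    return results


if __name__ == "__main__":
    # Hypothetical plan: "build" and "lint" both wait on "fetch".
    plan = {"fetch": set(), "build": {"fetch"}, "lint": {"fetch"}, "test": {"build"}}
    print(run_plan(plan, lambda name, workdir: f"{name} ran in {workdir}"))
```

In this sketch, `build` and `lint` can run at the same time once `fetch` finishes, while `test` waits for `build`. A real system would also need failure handling and retries, which are left out here.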

Evolutionary Pulvinar.

When prompt engineering hits a wall, Colosseum takes over. It breeds thousands of solution variants in a WASM sandbox to find the optimal result through natural selection.
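The selection loop behind this kind of search can be sketched in a few lines of Python. This is a generic evolutionary loop, not Colosseum's algorithm: `evolve`, `fitness`, and `mutate` are hypothetical names, the toy objective is invented for illustration, and the WASM sandbox is left out entirely (candidates are scored in-process).

```python
import random


def evolve(seed_variants, fitness, mutate, generations=50,
           population=200, survivors=20, rng=None):
    """Return the highest-scoring variant found.

    fitness(variant) -> number, higher is better.
    mutate(variant, rng) -> a new, slightly altered variant.
    """
    rng = rng or random.Random()
    pool = list(seed_variants)
    for _ in range(generations):
        parents = pool
        # Breed: fill the population with mutated copies of the parents.
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(population - len(parents))]
        # Select: keep only the top scorers. Parents compete too, so the
        # best score never gets worse from one generation to the next.
        pool = sorted(parents + children, key=fitness, reverse=True)[:survivors]
    return max(pool, key=fitness)


if __name__ == "__main__":
    # Toy objective (an assumption for illustration): find x close to 3.
    best = evolve(
        seed_variants=[0.0],
        fitness=lambda x: -(x - 3.0) ** 2,
        mutate=lambda x, rng: x + rng.gauss(0.0, 0.5),
        rng=random.Random(0),
    )
    print(best)
```

With the defaults above, the loop scores several thousand variants in total. Because the search is stochastic, it finds a high-scoring variant rather than a provably optimal one.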